Posted by Chinmay Kulkarni, Stanford University Ph.D candidate and former Google Intern, and Ed H. Chi, Google Research Scientist

News is one of the most important parts of our collective information diet, and like any other activity on the Web, online news reading is fast becoming a social experience. Internet users today see recommendations for news from a variety of sources; newspaper websites allow readers to recommend news articles to each other, restaurant review sites present other diners’ recommendations, and now several social networks have integrated social news readers.

With news article recommendations and endorsements coming from a combination of computers and algorithms, companies that publish and aggregate content, friends and even complete strangers, how do these explanations (i.e. why the articles are shown to you, which we call “annotations”) affect users’ selections of what to read? Given the ubiquity of online social annotations in news dissemination, it is surprising how little is known about how users respond to these annotations, and how to offer them to users productively.

In All the News that’s Fit to Read: A Study of Social Annotations for News Reading, presented at the 2013 ACM SIGCHI Conference on Human Factors in Computing Systems and highlighted in the list of influential Google papers from 2013, we reported results from two experiments with voluntary participants. The results suggest that social annotations, which have so far been treated as a generic, simple method for increasing user engagement, are not simple at all: they vary significantly in their persuasiveness and in their ability to change user engagement.

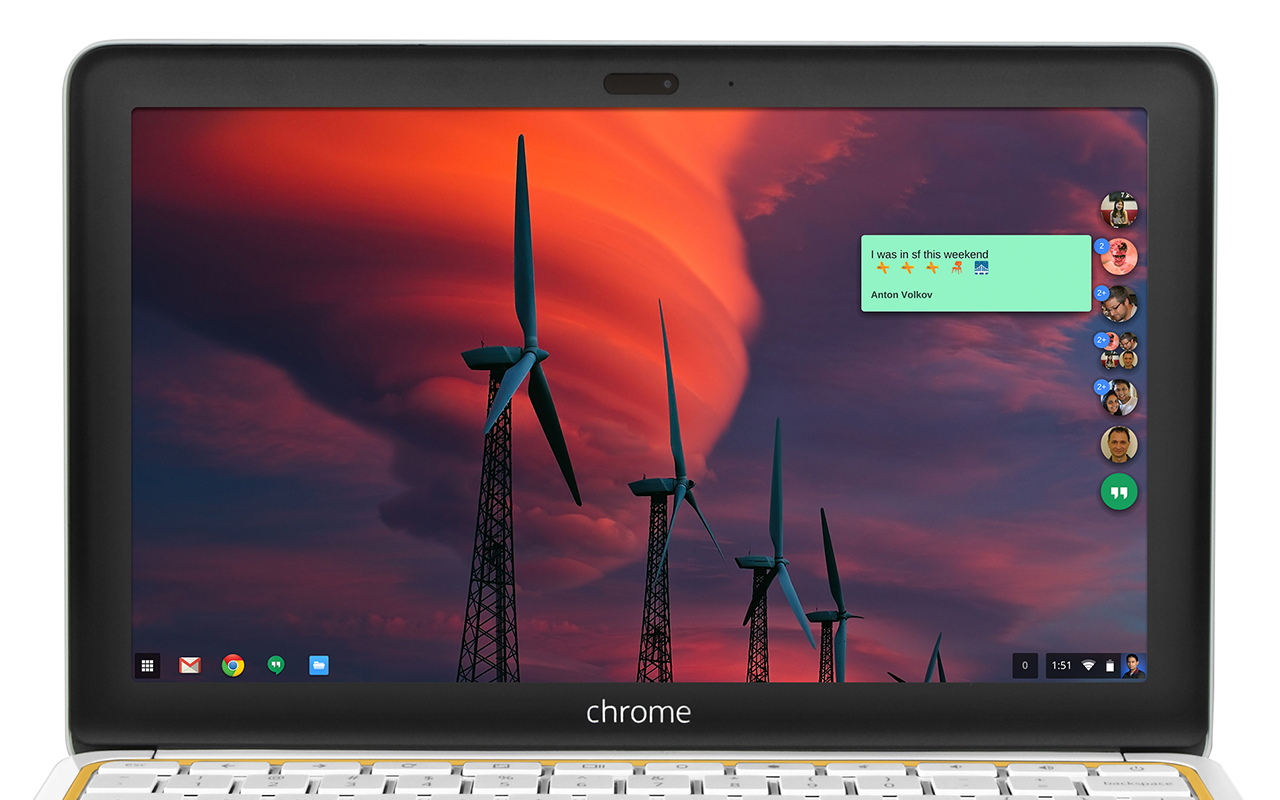

News articles in different annotation conditions

The first experiment looked at how people use annotations when the content they see is not personalized, and the annotations are not from people in their social network, as is the case when a user is not signed into a particular social network. Participants who signed up for the study were suggested the same set of news articles via annotations from strangers, a computer agent, and a fictional branded company. Additionally, they were told whether or not other participants in the experiment would see their name displayed next to articles they read (i.e. “Recorded” or “Not Recorded”).

Surprisingly, annotations by unknown companies and computers were significantly more persuasive than those by strangers in this “signed-out” context. This result implies the potential power of suggestion offered by annotations, even when they’re conferred by brands or recommendation algorithms previously unknown to the users, and that annotations by computers and companies may be valuable in a signed-out context. Furthermore, the experiment showed that with “recording” on, the overall number of articles clicked decreased compared to participants without “recording,” regardless of the type of annotation, suggesting that subjects were cognizant of how they appear to other users in social reading apps.

If annotations by strangers are not as persuasive as those by computers or brands, as the first experiment showed, what about the effects of friend annotations? The second experiment examined the signed-in experience (with Googlers as subjects) and how they reacted to social annotations from friends, investigating whether personalized endorsements help people discover and select what might be more interesting content.

Perhaps not entirely surprisingly, results showed that friend annotations are persuasive and improve user satisfaction with news article selections. What’s interesting is that, in post-experiment interviews, we found that annotations influenced whether participants read articles primarily in three cases: first, when the annotator was above a threshold of social closeness; second, when the annotator had subject expertise related to the news article; and third, when the annotation provided additional context to the recommended article. This suggests that social context and personalized annotation work together to improve user experience overall.

Some questions for future research include whether highlighting expertise in annotations helps, whether the threshold for social proximity can be algorithmically determined, and whether aggregating annotations (e.g. “110 people liked this”) helps increase engagement. We look forward to further research that enables social recommenders to offer appropriate explanations for why users should pay attention, and that reveals more nuances based on the presentation of annotations.
